See my recap of the recent EuroSciPy, published on https://data.blog

In which Boris Gorelik shares his favorite talks and workshops from EuroSciPy 2018.

If you ever gave or attended a presentation, you are familiar with this situation: the presenter asks whether there are any questions and … nobody asks anything. This is an awkward situation. Why aren’t there any questions? Is it because everything is clear? Not likely. Everything is never clear. Is it because nobody cares? Well, maybe. There are certainly many people that don’t care. It’s a fact of life. Study your audience, work hard to make the presentation relevant and exciting but still, some people won’t care. Deal with it.

However, the bigger reasons for the lack of questions are human laziness and the fear of looking stupid. Nobody likes asking a question that someone will perceive as a stupid one. Some people don’t mind asking a question but are embarrassed and prefer not to be the first to break the silence.

What can you do? Usually, I prepare one or two questions myself. Then, if nobody asks anything, I say something like “Some people, when they see these results, ask me whether it is possible to scale this method to larger sets.” Then, depending on how confident you are, you may provide the answer or ask “What do you think?”

You can even prepare a slide that answers your question. In the screenshot below, you may see the slide deck of the presentation I gave in Trento. The blue slide at the end of the deck is the final slide, where I thank the audience for the attention and ask whether there are any questions.

My plan was that if nobody asked me anything, I would say “Thank you again. If you want to learn more practical advice about data visualization, watch the recording of my tutorial, where I present this method <SLIDE TRANSFER, show the mockup of the “book”>. Also, many people ask me about reading suggestions; this is what I suggest you read: <SLIDE TRANSFER, show the reading pointers>”.

Luckily for me, there were questions after my talk. Luckily, one of these questions was about practical advice so I had a perfect excuse to show the next, pre-prepared, slide. Watch this moment on YouTube here.

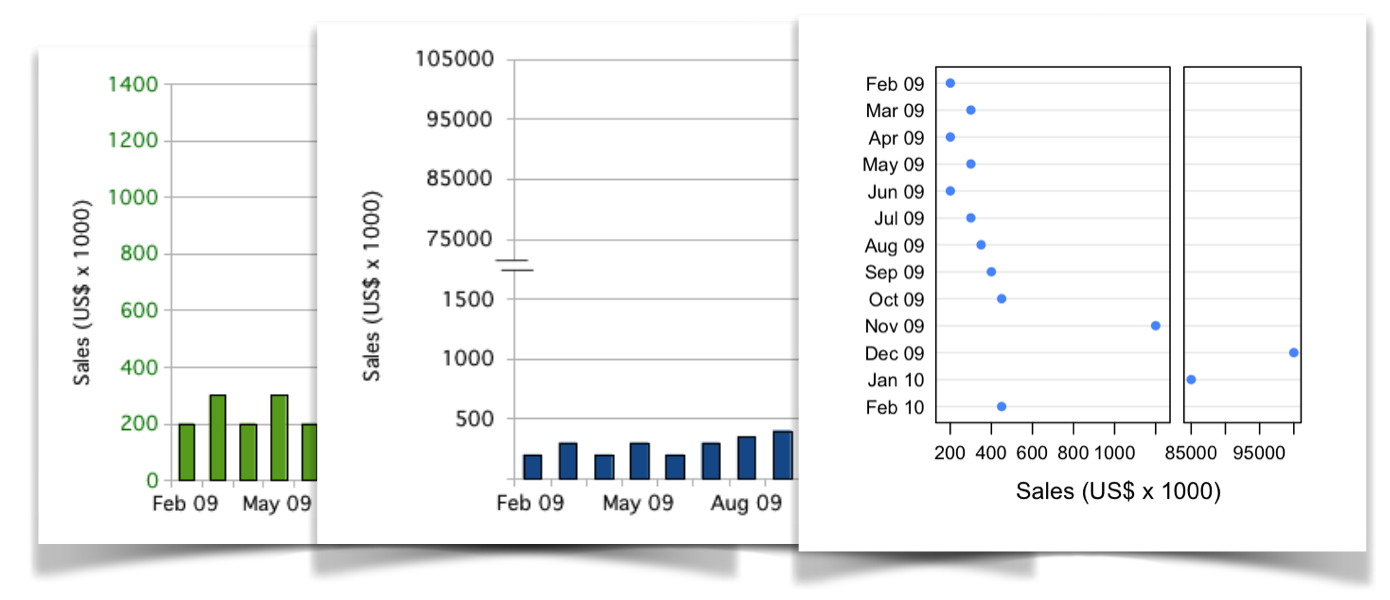

My colleague, Charles Earl, pointed me to this interesting 2010 post that explores different ways to visualize categories of drastically different sizes.

The post author, Tom Hopper, experiments with different ways to deal with “Data Giraffes”. Some of his experiments are really interesting (such as splitting the graph area). In one experiment, Tom Hopper draws a bar chart on a log scale. Doing so is considered bad practice. The value (Y) axis of a bar chart must include a meaningful zero, which a log scale, by definition, cannot have.
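To see why the missing zero matters, here is a minimal sketch in plain Python. The numbers and the helper names (`bar_lengths_linear`, `bar_lengths_log`) are made up for illustration and do not come from Tom Hopper’s post. A bar encodes its value by its length. On a linear axis that starts at zero, a value 100 times larger gets a bar 100 times longer. On a log axis, the bars have to start at some arbitrary positive baseline, and the ratio between the bar lengths changes with that choice.

```python
import math


def bar_lengths_linear(values):
    """Bar length on a linear axis with a zero baseline is the value itself."""
    return list(values)


def bar_lengths_log(values, baseline):
    """Bar length on a log10 axis whose bars start at `baseline` (must be > 0)."""
    return [math.log10(v) - math.log10(baseline) for v in values]


small, giraffe = 10, 1000  # the "giraffe" is 100 times larger

linear = bar_lengths_linear([small, giraffe])
print("linear axis, length ratio:", linear[1] / linear[0])

# The same two values, drawn on a log axis with three different baselines.
for baseline in (1, 0.1, 0.001):
    log_bars = bar_lengths_log([small, giraffe], baseline)
    print("log axis, baseline", baseline, "length ratio:", log_bars[1] / log_bars[0])

# A log axis cannot start at zero at all.
try:
    math.log10(0)
except ValueError as err:
    print("log10(0) is undefined:", err)
```

The length ratio on the log axis depends only on where the axis happens to start, so the bars no longer say anything reliable about how much bigger one category is than another.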

Other than that, a good read: Graphing Highly Skewed Data – Tom Hopper

About a week ago, I met Justin Mayer and had a really interesting chat with him about internet privacy. Today, his 30-minute talk on that subject appeared in my YouTube suggestion list.

https://www.youtube.com/watch?v=2rrP_aW-jNA

How ironic. The talk, by the way, is very interesting.

I wonder how this analysis remained unnoticed by social media.

The recent election of Doug Jones […] got me thinking: What if the Black populations of Southern cities were to experience a dramatic increase? How many other elections would be impacted?

via Back to Mississippi: Black migration in the 21st century — Charlescearl’s Weblog

Please leave a comment to this post. It doesn’t matter what. It doesn’t matter when or where you see it. I want to see how many real people are actually reading this blog.

Photo by Pixabay on Pexels.com

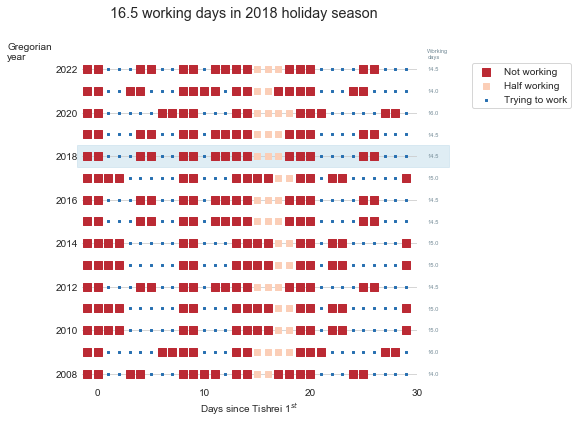

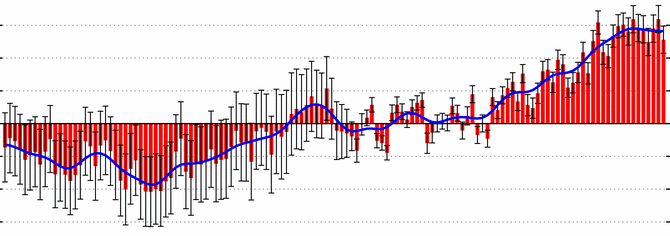

Tishrei is the seventh month (*) of the Hebrew calendar, and it starts with Rosh-HaShana, the Hebrew New Year. It is a 30-day month that usually falls in September-October. One interesting feature of Tishrei is that it is full of holidays: Rosh-HaShana (New Year), Yom Kippur (Day of Atonement), and the first and last days of Sukkot (Feast of Tabernacles) (**). All these days are rest days in Israel. Every holiday eve is also a *de facto* rest day in many industries (high tech included). So now we have 8 rest days that add to the usual Friday/Saturday pairs, resulting in very sparse work weeks. But that’s not all: the days between the first and the last days of Sukkot are mostly considered half working days. Also, the children are at home since all the schools and kindergartens are on vacation, so we will treat those days as half working days in the following analysis.

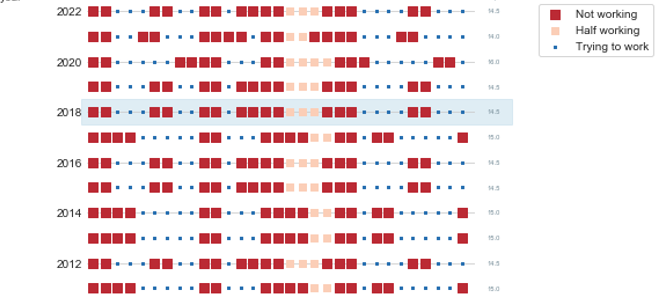

I have counted the number of business days during this 31-day period (one day before the New Year plus the entire month of Tishrei) between 2008 and 2023 CE, and this is what we get:

Overall, this period includes between 15 and 17 non-working days in a single month (31 days, mind you). This is what the working/not-working time during this month looks like:

Now, having some vacation is nice, but this month is absolutely crazy. There is not a single full working week during this month. It is very similar to a constantly interrupted workday, but at a different scale.

So, next time you wonder why your Israeli colleague, customer or partner barely works during September-October, recall this post.

(*) New Year starts in the seventh month? I know this is confusing. That’s because we number Nissan, the month of the Exodus from Egypt, as the first month.

(**) If you are an observant Jew, you should add the Fast of Gedalia to this list, but we will omit it from this discussion.

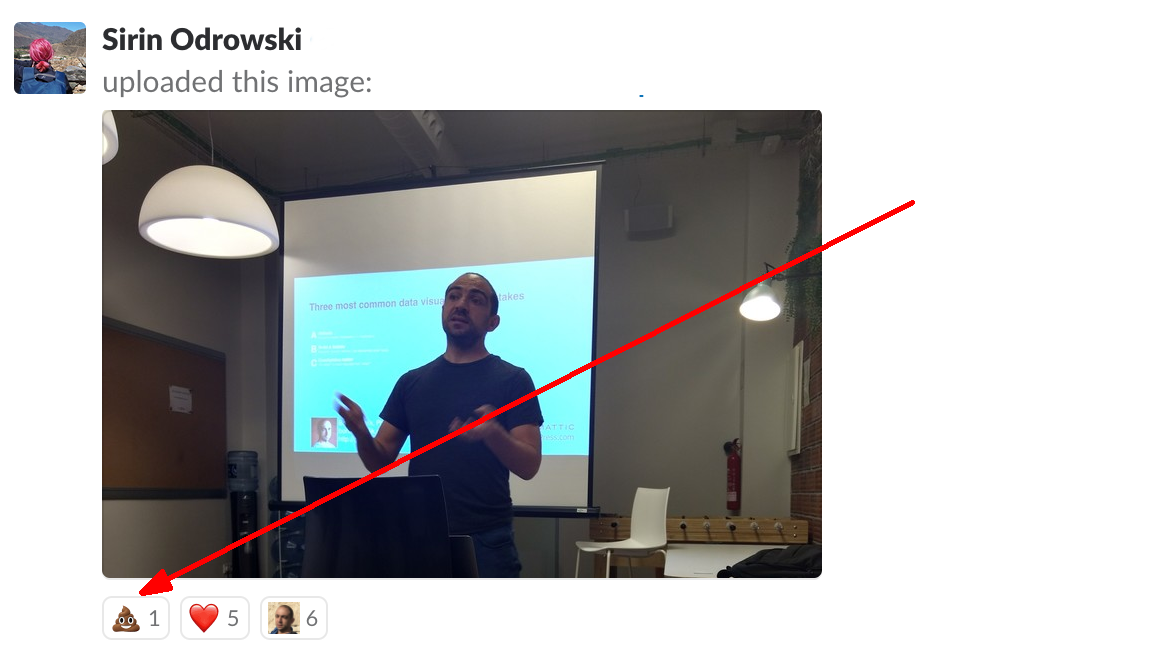

Today, during the EuroSciPy conference, I gave a presentation titled “Three most common mistakes in data visualization and how to avoid them”. The title of this presentation is identical to the title of the presentation that I gave in Barcelona earlier this year. The original presentation was approximately one and a half hours long. I knew that EuroSciPy presentations were expected to be shorter, so I was prepared to shorten my talk to half an hour. At some point, a couple of days before departing to Trento, I realized that I was only allocated 15 minutes. Fifteen minutes! Instead of ninety.

Frankly speaking, I was in a panic. I even considered contacting EuroSciPy organizers and asking them to remove my talk from the program. But I was too embarrassed, so I decided to take the risk and started throwing slides away. Overall, I think that I spent eight to ten working hours shortening my presentation. Today, I finally presented it. Based on the result, and on the feedback that I got from the conference audience, I now know that the 15-minute version is better than the original, longer one. A video recording of my talk is available on YouTube and is embedded below. Below is my slide deck.

[slideshare id=112261825&doc=20180830abcthreemostcommonmistakescopy-180830134825]

Illustration image credit: Photo by Jo Szczepanska on Unsplash

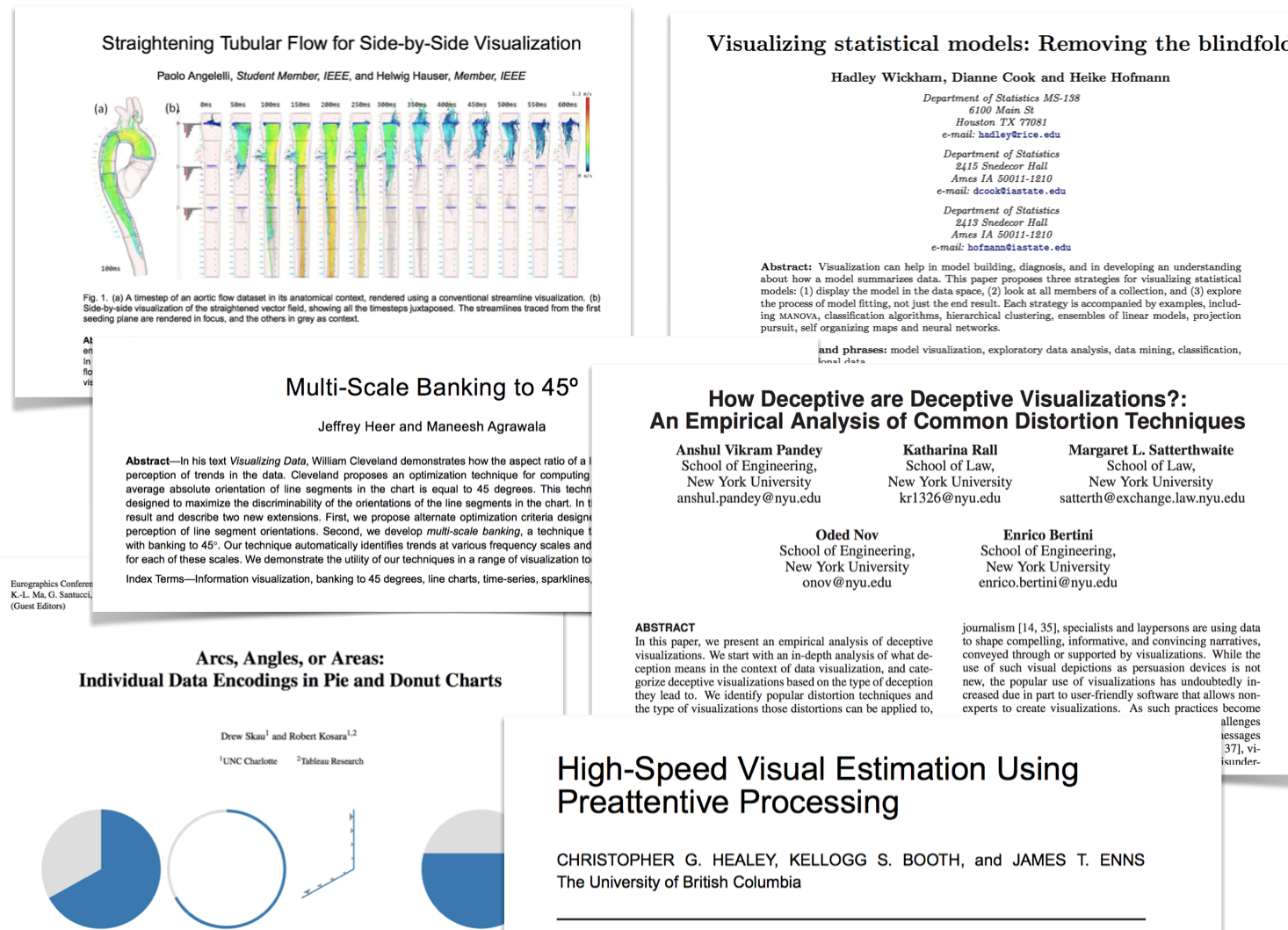

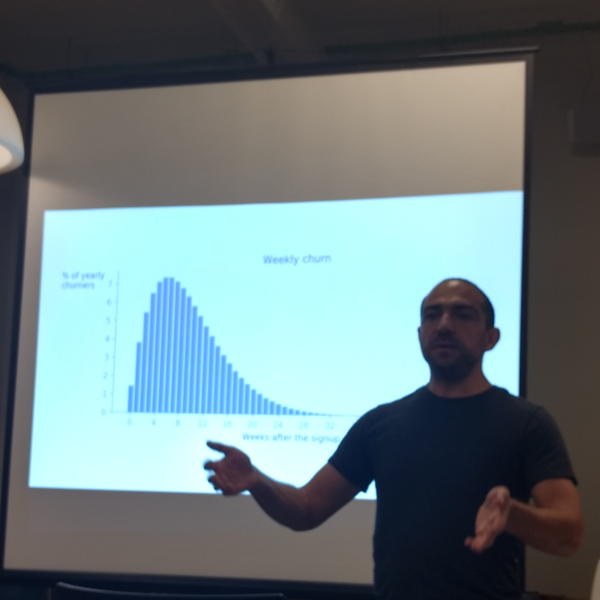

Yesterday, I gave a data visualization workshop at EuroSciPy 2018 in Trento. I spent HOURS building and improving it. I even developed a “simple to use, easy to follow, never failing formula” for the data visualization process (I’ll write about it later).

I enjoyed this workshop so much, both preparing it and (even more so) delivering it. There were so many useful questions and remarks. The most important remark was made by Gael Varoquaux, who pointed out that one of my examples was suboptimal for visually impaired people. The embarrassing part is that one of the last lectures that I gave in my college data visualization course was about visual communication for the visually impaired. That is why the first thing I did when I came to my hotel after the workshop was to fix the error. You may find all the (corrected) material I used in this workshop on GitHub. Below is the video of the workshop, in case you want to follow it.

https://www.youtube.com/watch?v=H-K_fSA54AM

Photo credit: picture of me delivering the workshop is by Margriet Groenendijk

I am excited to run a data visualization tutorial, and to give a data visualization talk during the 2018 EuroSciPy meeting in Trento, Italy.

My tutorial “Data visualization – from default and suboptimal to efficient and awesome” will take place on Sep 29 at 14:00. This is a two-hour tutorial during which I will cover two or three examples. I will start with the default Matplotlib graph and modify it step by step to make a beautiful aid in technical communication. I will publish the tutorial notebooks immediately after the conference.

My talk “Three most common mistakes in data visualization” will be similar in nature to the one I gave in Barcelona this March, but more condensed and enriched with information I learned since then.

If you plan to attend EuroSciPy and want to chat with me about data science, data visualization, or remote working, write a message to boris@gorelik.net.

Full conference program is available here.

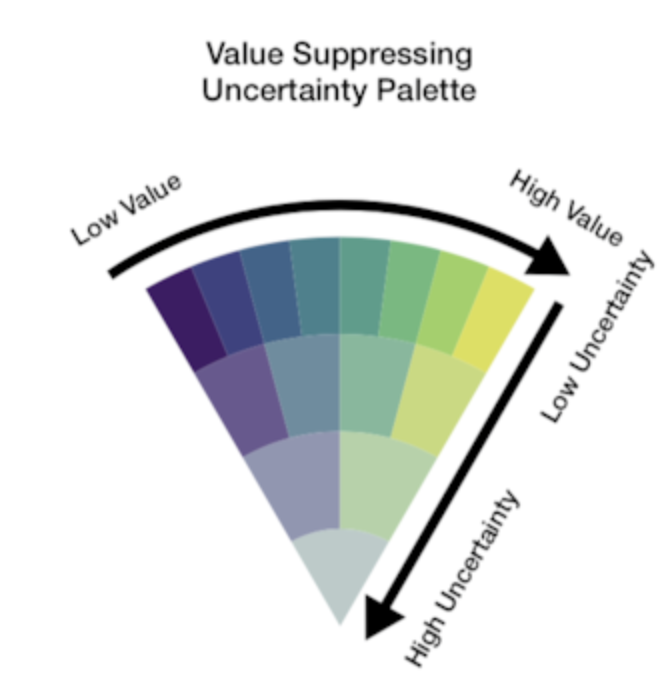

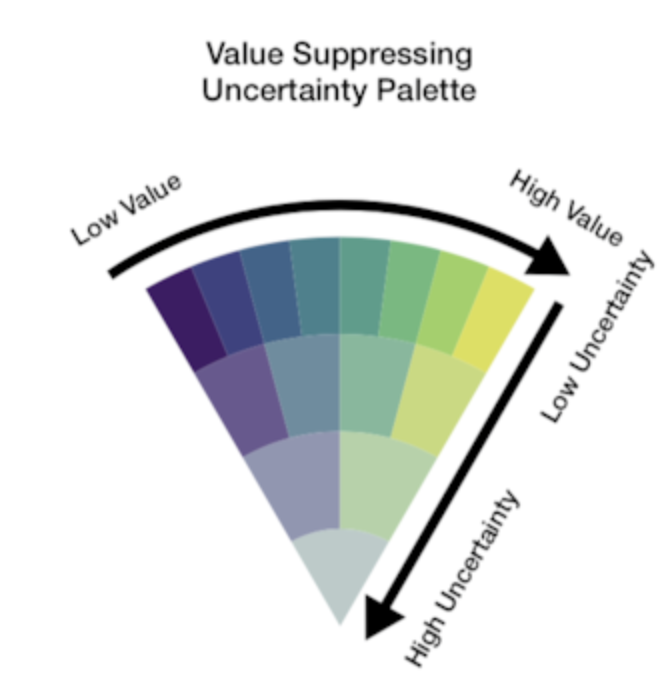

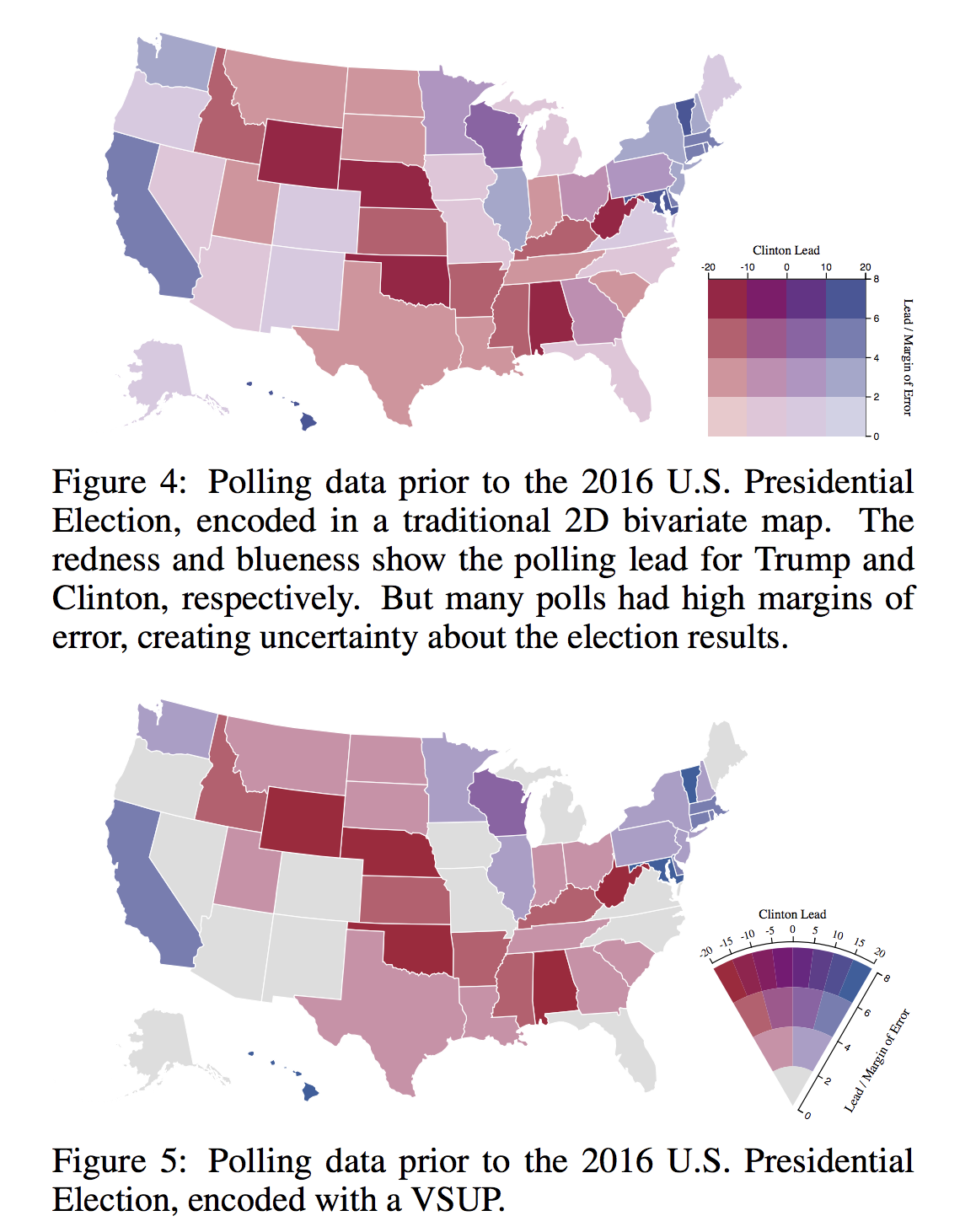

Uncertainty is one of the most neglected aspects of number-based communication and one of the most important concepts in general numeracy. Comprehending uncertainty is hard. Visualizing it is, apparently, even harder.

Last week I read a paper called Value-Suppressing Uncertainty Palettes, by M. Correll, D. Moritz, and J. Heer from the data visualization and interactive analysis research group at the University of Washington. This paper describes an interesting approach to color-encoding uncertainty.

Uncertainty visualization is commonly done by reducing color saturation and opacity. Correll et al. suggest combining saturation reduction with limiting the number of possible colors in a color palette. Unfortunately, the authors used JavaScript and not Python for this paper, which means that, in the future, I might try implementing it in Python.
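To make the idea concrete, here is a rough sketch in Python that uses only the standard library. It is not the authors’ implementation: the function name `vsup_like_color`, the halving of value bins at every uncertainty level, and the blue-to-red hue ramp are all my assumptions. It only illustrates the two ingredients described above: as uncertainty grows, the color gets less saturated and the number of distinct value colors shrinks.

```python
import colorsys


def vsup_like_color(value, uncertainty, levels=4):
    """Map (value, uncertainty), both in [0, 1], to an RGB hex color.

    Illustrative only: the more uncertain a point is, the fewer distinct
    value bins it may use and the less saturated its color becomes.
    """
    if not (0.0 <= value <= 1.0 and 0.0 <= uncertainty <= 1.0):
        raise ValueError("value and uncertainty must be in [0, 1]")

    # Uncertainty level: 0 = most certain, levels - 1 = least certain.
    u_level = min(int(uncertainty * levels), levels - 1)

    # Halve the number of value bins at each uncertainty level
    # (8, 4, 2, 1 bins when levels = 4).
    n_bins = 2 ** (levels - 1 - u_level)
    v_bin = min(int(value * n_bins), n_bins - 1)
    v_center = (v_bin + 0.5) / n_bins  # representative value of the bin

    # Hue encodes the binned value; saturation fades with uncertainty
    # but stays above zero.
    hue = 0.66 * (1.0 - v_center)  # blue for low values, red for high values
    saturation = 1.0 - u_level / levels
    r, g, b = colorsys.hsv_to_rgb(hue, saturation, 0.9)
    return "#{:02x}{:02x}{:02x}".format(round(r * 255), round(g * 255), round(b * 255))


# Certain data gets many distinct colors; very uncertain data gets one.
for u in (0.0, 0.3, 0.6, 0.9):
    palette = sorted({vsup_like_color(v / 100, u) for v in range(101)})
    print(u, len(palette), palette)
```

With the default four levels, a very uncertain observation gets the same pale color whatever its value, which is the "value-suppressing" part of the name.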

Visualizing uncertainty is one of the most challenging tasks in data visualization. Uncertain

via Value-Suppressing Uncertainty Palettes – UW Interactive Data Lab – Medium

Excellent piece (part one of three) about time series analysis by my colleague Carly Stambaugh

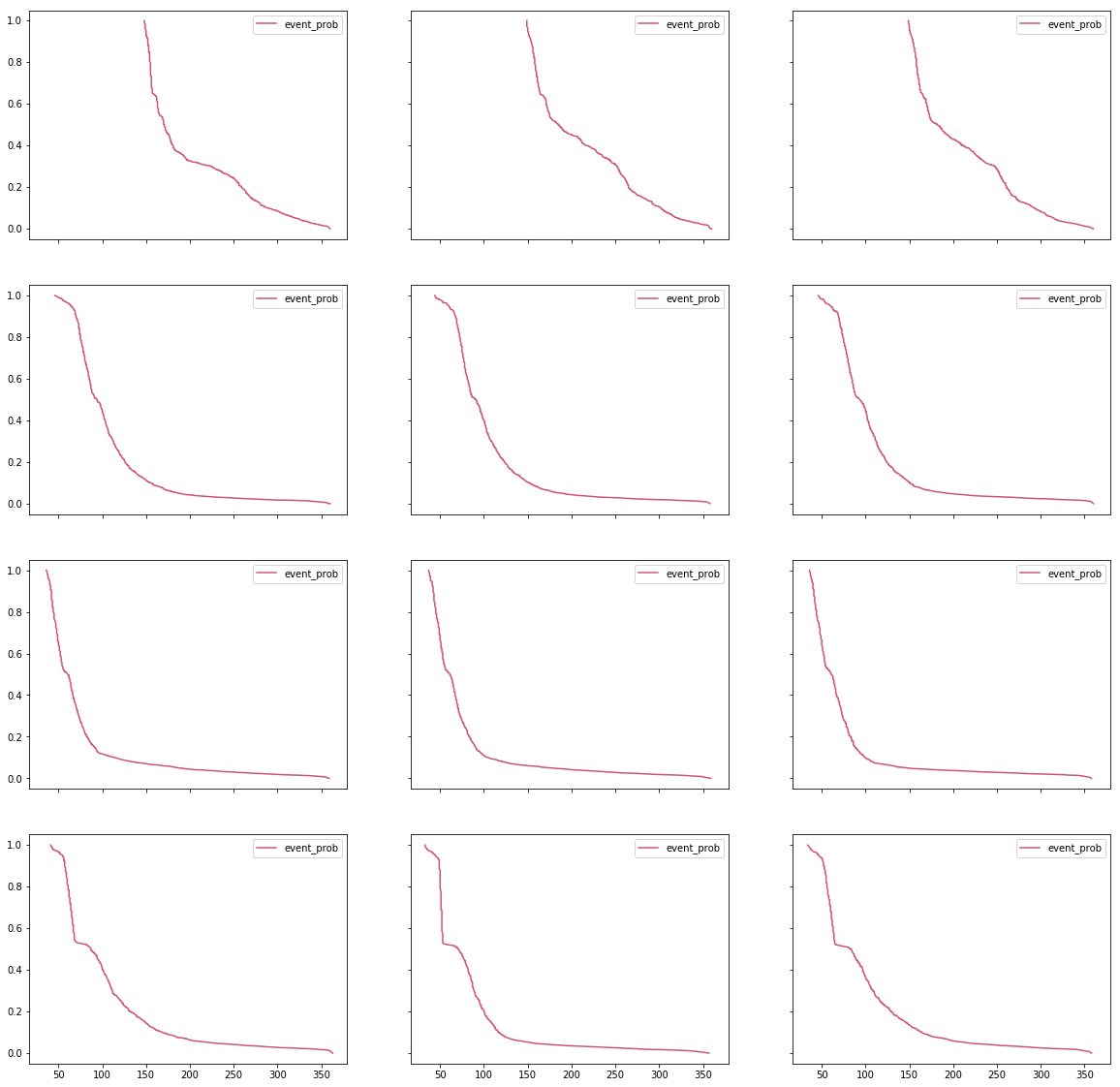

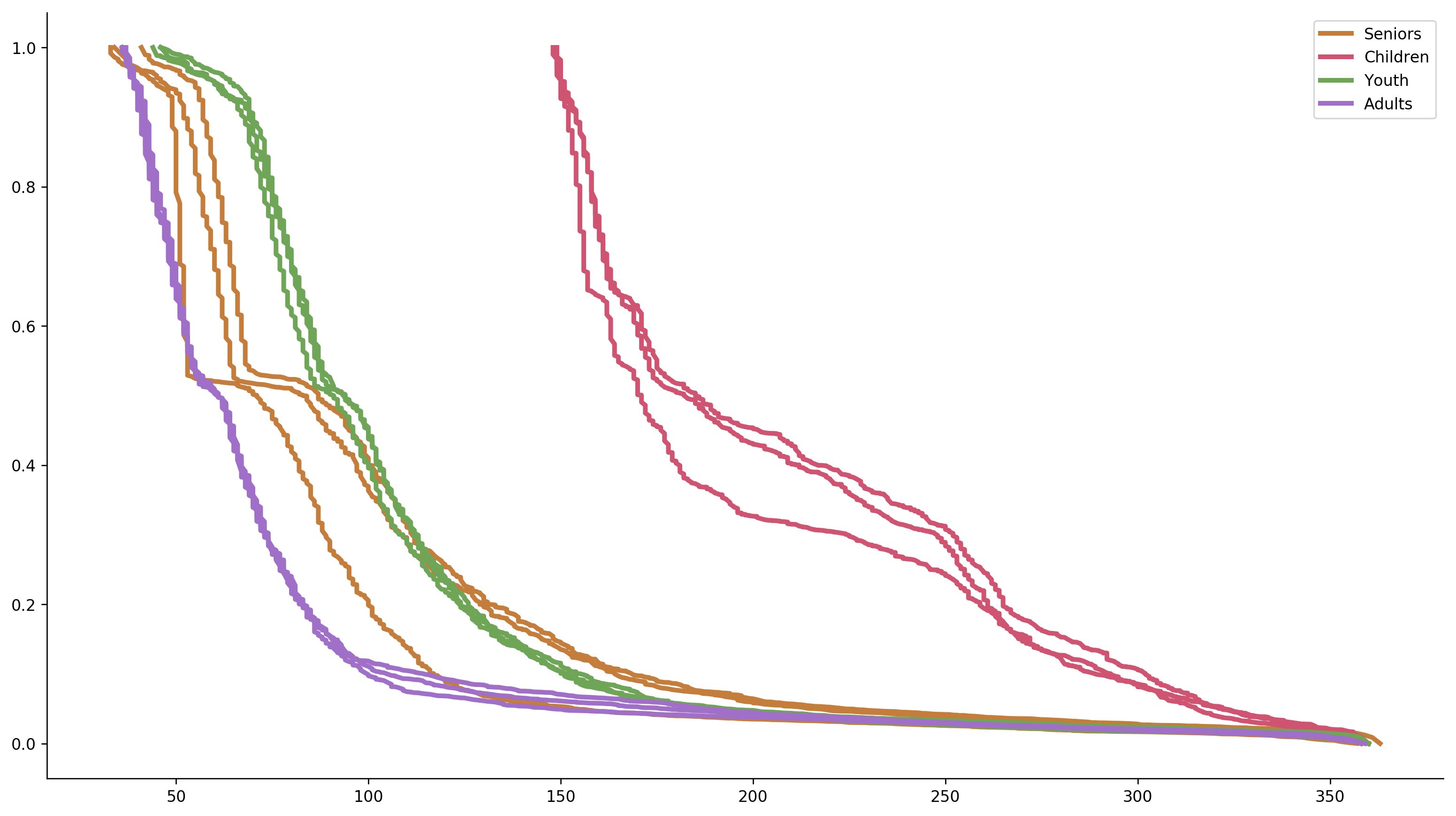

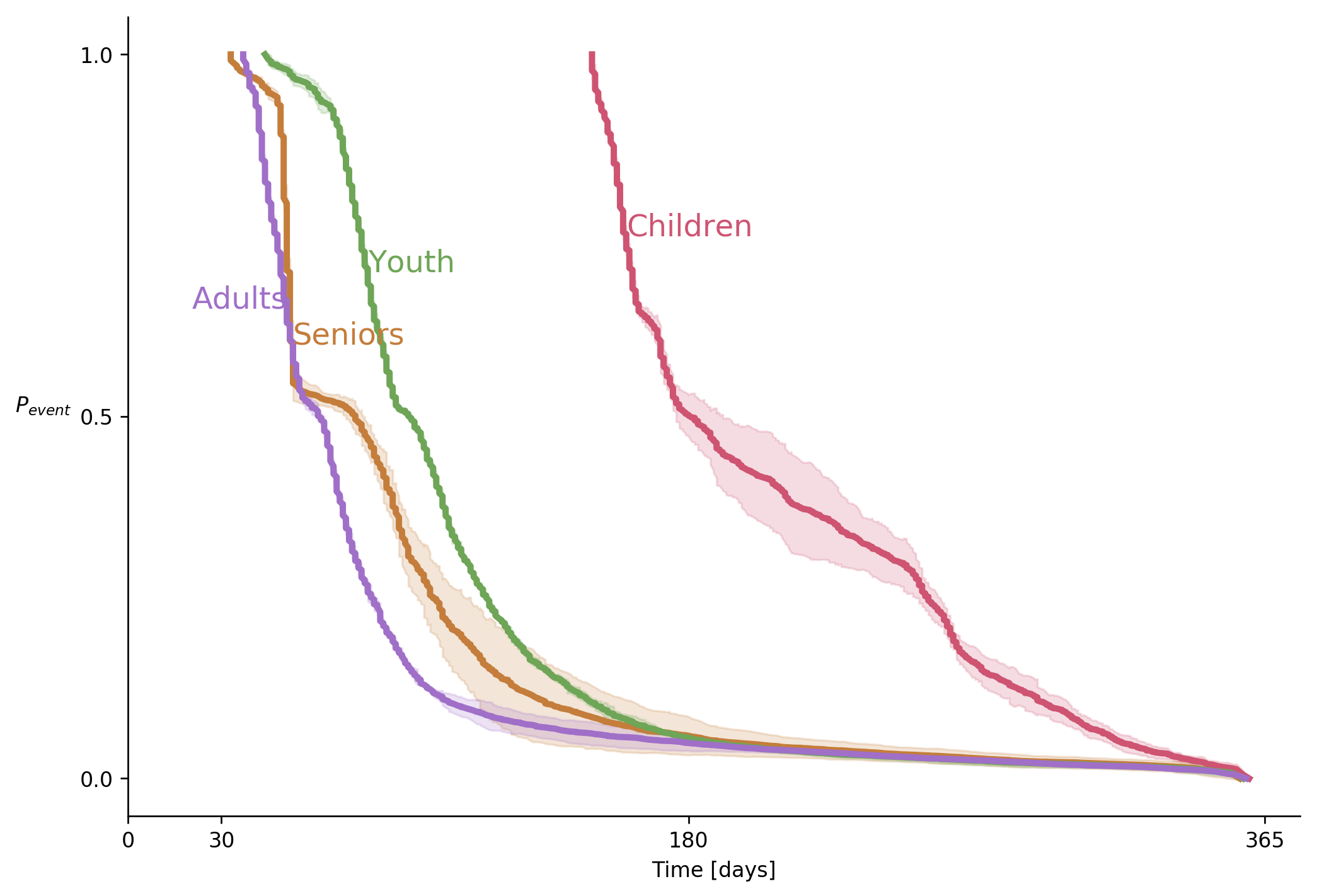

From time to time, people ask me for help with non-trivial data visualization tasks. A couple of weeks ago, a friend-of-a-friend-of-a-friend showed me a set of graphs with the following note:

Each row is a different use case. Each use case was tested on three separate occasions – columns 1,2,3. We hope to show that the lines in each row behave similarly, but that there are differences between the different rows.

Before looking at the graphs, note the last sentence in the above comment. Knowing what you want to show is an essential and nontrivial part of a data visualization task. Specifying what it is, precisely, that you want to say is the first required task in any communication attempt, technical or not.

For the obvious reasons, I cannot share the original graphs that that person gave me. I managed to re-create the spirit of those graphs using a combination of randomly generated arrays.

Notice how the X- and Y- axes are aligned between all the subplots. Such alignment is a smart move that provides a shared scale and allows faster and more natural comparison between the curves. You should always try aligning your axes. If aligning isn’t possible, make sure that it is absolutely, 100%, clear that the scales are different. Slight differences are very confusing.
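In Matplotlib, the simplest way to get such alignment is to ask for it when creating the grid of subplots. The sketch below is not the code behind the original figure. The curves are randomly generated, and the shape of the curves and the rates are made up for illustration. The only point is the `sharex=True, sharey=True` pair.

```python
import matplotlib

matplotlib.use("Agg")  # render off-screen; drop this line in a notebook
import matplotlib.pyplot as plt
import numpy as np

rng = np.random.default_rng(0)
days = np.arange(1, 366)

# 4 use cases (rows) x 3 repetitions (columns), all on one shared scale.
fig, axes = plt.subplots(
    nrows=4, ncols=3, sharex=True, sharey=True, figsize=(9, 8)
)
for row, rate in enumerate([0.005, 0.01, 0.02, 0.04]):
    for col in range(3):
        noise = rng.normal(0, 0.02, size=days.size)
        curve = 1 - np.exp(-rate * days) + noise  # made-up saturating curve
        axes[row, col].plot(days, curve)

# Every subplot now reports the same axis limits.
print(axes[0, 0].get_xlim(), axes[3, 2].get_xlim())
print(axes[0, 0].get_ylim(), axes[3, 2].get_ylim())
```

With shared axes, Matplotlib also hides the tick labels of the inner subplots, which removes some visual noise for free.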

There are several small things that we can do to improve this graph. First, the identical legends in every subplot are a useless waste of ink and thus, of your viewers’ processing power. Since they are identical, these legends do nothing but distract the viewer. Moreover, while I understand how a variable name such as event_prob appeared on a graph, showing such names outside technical teams is a bad practice. People who don’t share intimate knowledge of the underlying data will find human-readable labels easier to comprehend, making your message “stickier.”

Let’s improve the signal-to-noise ratio of this plot.

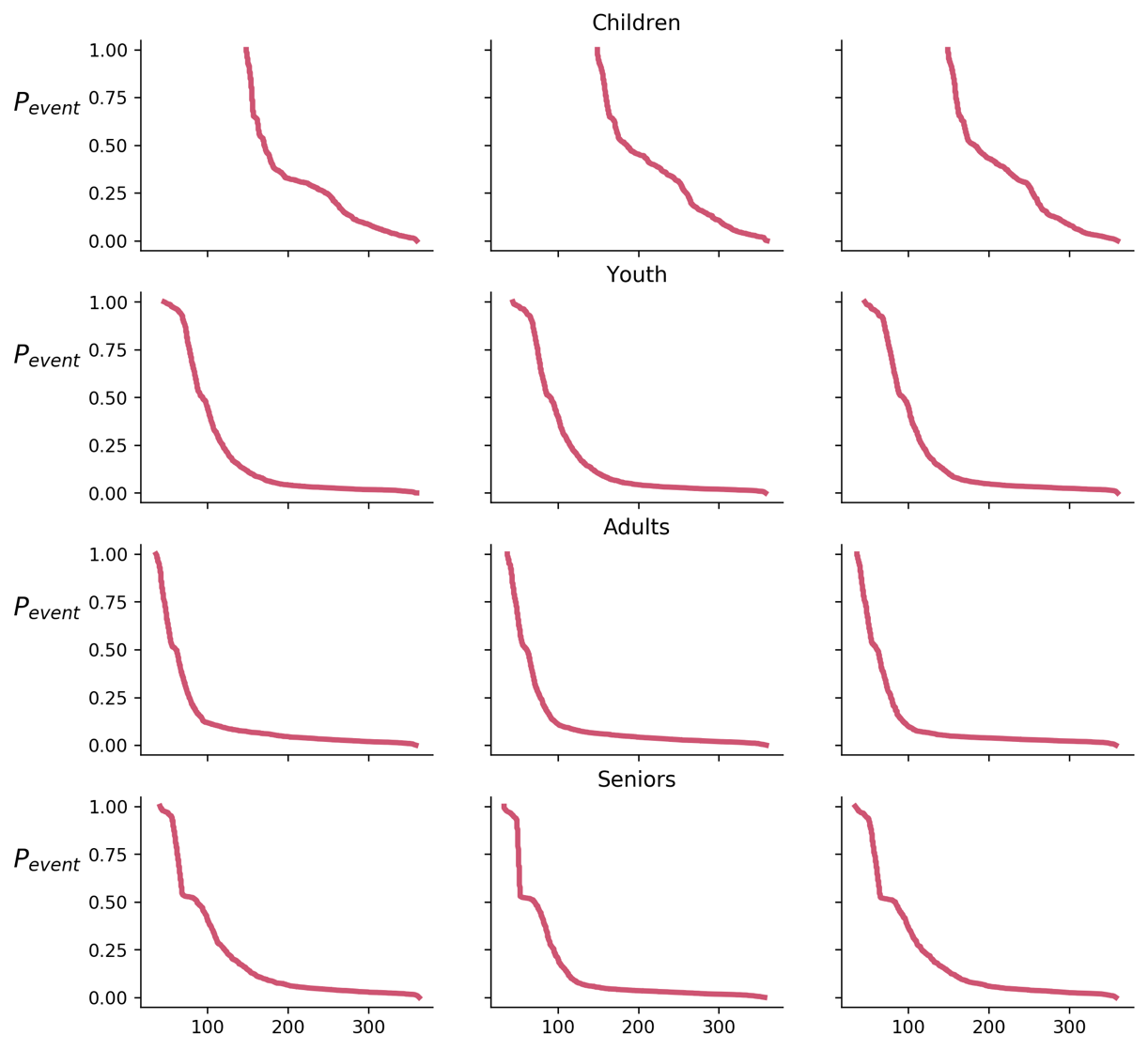

According to our task, each row is a different use case. Notice that I accompanied each row with a human-readable label. I didn’t use cryptic codes such as group_001, age_0_10, or the like.

Now, let’s go back to the task specification. “We hope to show that the lines in each row behave similarly, but that there are differences between the separate rows.” Remember my advice to always use conclusions as graph titles? Let’s test what such a title will look like.

Really? Is there a better way to justify the title? I claim that there is.

Let’s experiment a little bit. What will happen if we plot all the lines on the same graph? By doing so, we might create a stronger emphasis on the similarities and the differences.

Not bad. The separate lines create some excessive noise, and the legend isn’t the best way to label multiple lines, so let’s improve the graph even further.

Note the meaningful ticks on the X-axis. The 30, 180, and 365-day marks provide useful anchors.
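Here is a minimal Matplotlib sketch of these steps, again with randomly generated curves. The group names, rates, and colors are hypothetical and are not the data behind the figures in this post. It puts all the lines on one graph, labels each group directly instead of using a legend, keeps only the meaningful X ticks, and uses the conclusion as the title.

```python
import matplotlib

matplotlib.use("Agg")  # render off-screen; drop this line in a notebook
import matplotlib.pyplot as plt
import numpy as np

rng = np.random.default_rng(0)
days = np.arange(1, 366)

# Hypothetical groups: (human-readable label, made-up rate, color).
groups = [
    ("Group A", 0.005, "C0"),
    ("Group B", 0.01, "C1"),
    ("Group C", 0.02, "C2"),
    ("Group D", 0.04, "C3"),
]

fig, ax = plt.subplots(figsize=(7, 4))
for label, rate, color in groups:
    trend = 1 - np.exp(-rate * days)
    # Three repetitions per group share one color, so they read as one group.
    for _ in range(3):
        noisy = trend + rng.normal(0, 0.02, size=days.size)
        ax.plot(days, noisy, color=color, alpha=0.6, linewidth=1)
    # Direct label at the right end of the lines, instead of a legend.
    ax.text(days[-1] + 5, trend[-1], label, color=color, va="center")

ax.set_xticks([30, 180, 365])  # meaningful anchors only
ax.set_xlabel("Days")
ax.set_title("Low intra- and high inter-group variability")
```

Call `fig.savefig(...)` or `plt.show()` at the end to see the result.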

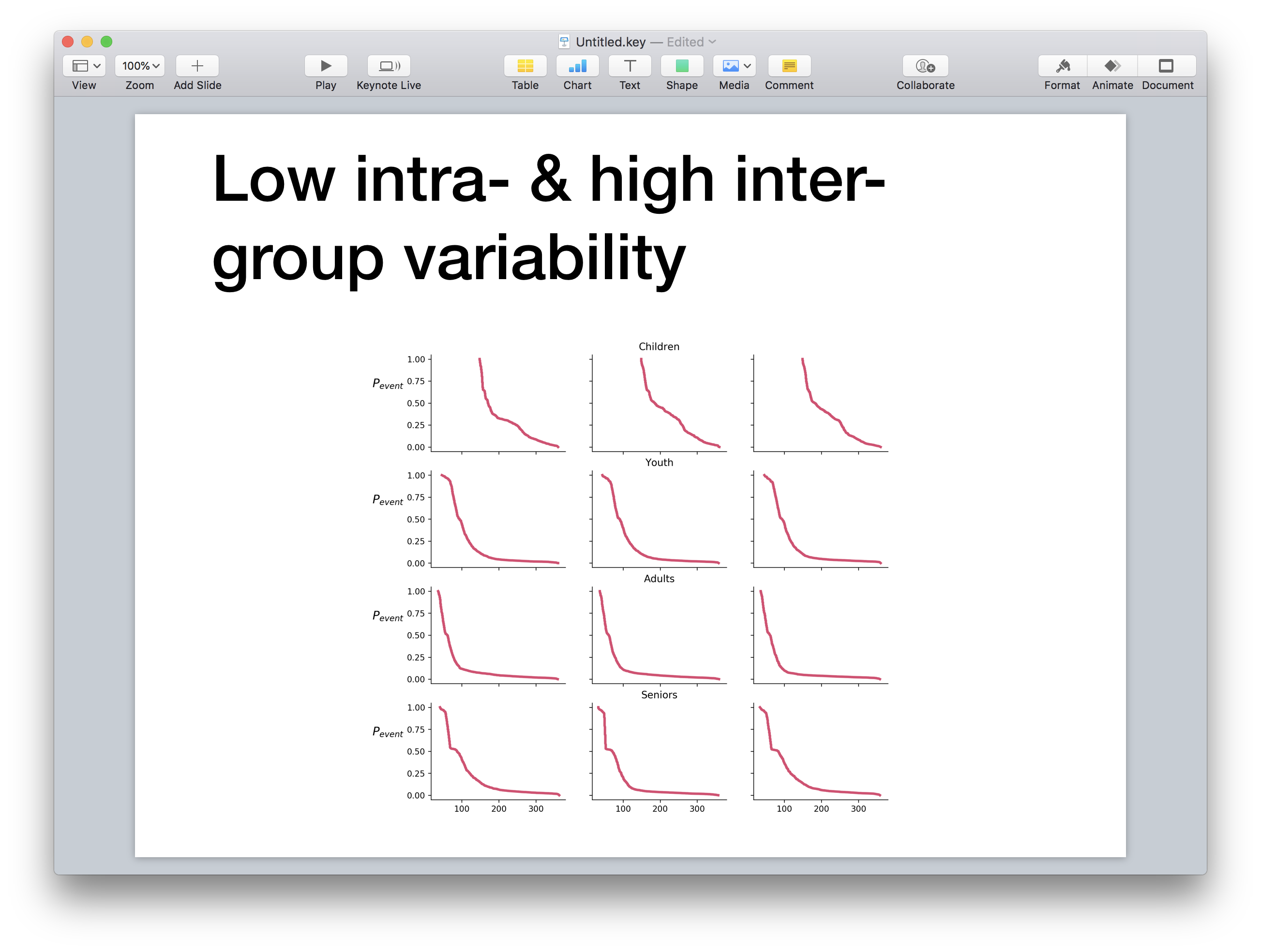

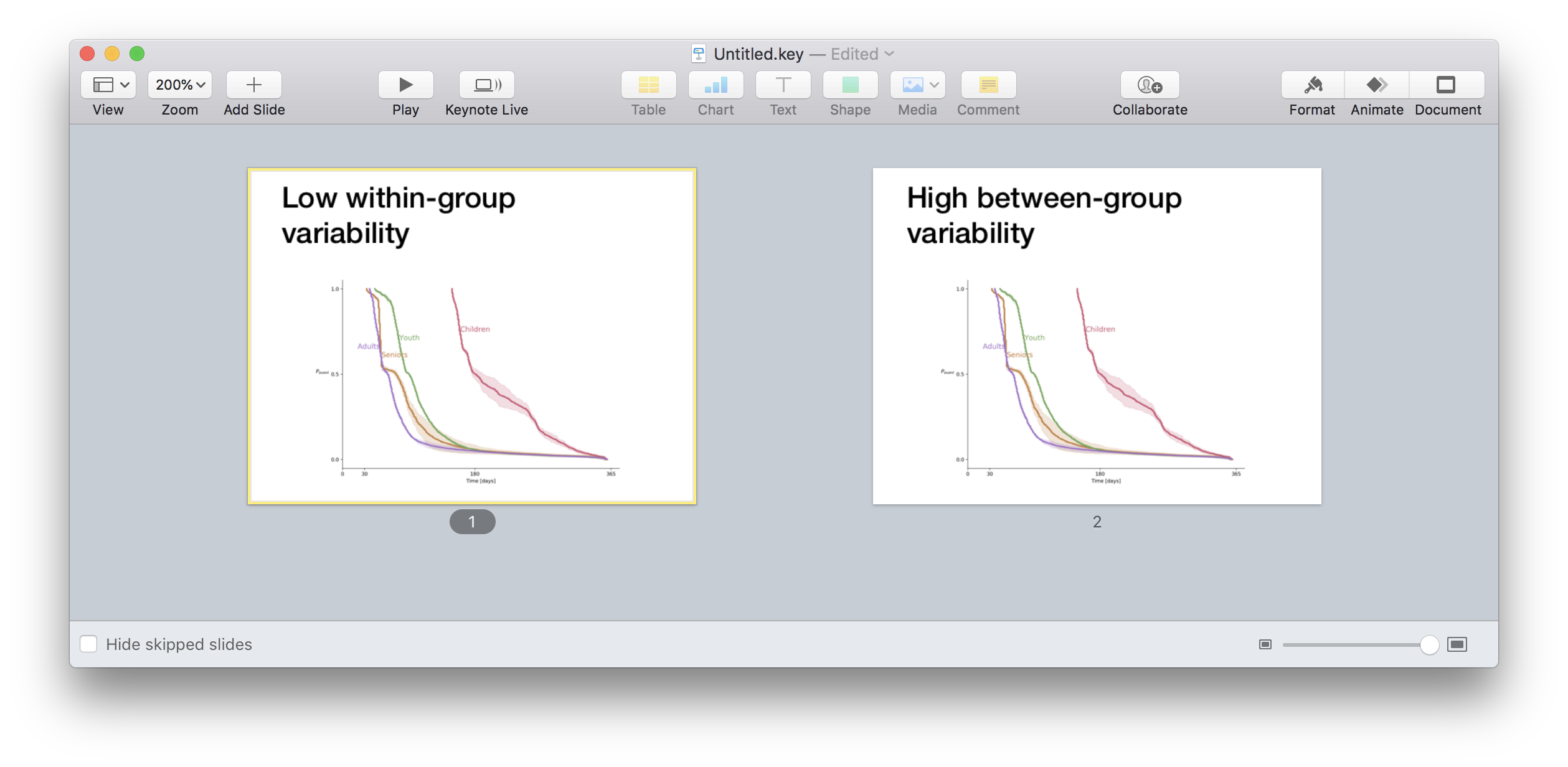

Now, let us go back to our title. “Low intra- and high inter- group variability” is, in fact, two conclusions. If you have ever read any text about technical presentations, you should remember the “one point per slide” rule. How do we solve this problem? In cases like these, I like to use the same graph in two different slides, one for each conclusion.

During a presentation, I would show this graph with the first conclusion as a title. I would talk about the implications of that conclusion. Next, I would say “Wait! There is more”, advance the slide, and start talking about the second conclusion.

First, decide what it is that you want to say. Then ask whether your graph says what you want to say. Next, emphasize what you want to say, and finally, say what you want to say.

The case that you see in this post is a relatively easy one because it only compares four groups. What will happen if you need to compare six, sixteen, or sixty groups? I will try answering this question in one of my next posts.

From time to time, I give a lecture about the most common mistakes in data visualization. In this lecture, I say that not adding a graph’s conclusion as a title is an opportunity wasted.

In one of these lectures, a fresh university graduate commented that in her university, she was told never to write a conclusion in a graph. According to the logic she was taught, a scientist is only supposed to show the information and let his or her peers draw the conclusions by themselves. This sounds like a valid demand except that it is, in my non-humble opinion, wrong. To understand why that is, let’s review the arguments in favor of spelling out the conclusions.

We cannot “unlearn” how to read. If you show a piece of graphic for its aesthetic value, it is perfectly OK not to suggest any conclusions. However, most of the time, you will show a graph to persuade someone, to convince them that you did a good job, that your product is worth investing in, or that your opponent is ruining the world. You hope that your audience will reach the conclusion that you want them to reach, but you are not sure. Spelling out your conclusion ensures that the viewers use it as a starting point. In many cases, they will be too lazy to think of objections and will adopt your point of view. You don’t have to believe me on this one. The Nobel Prize winner Daniel Kahneman wrote a book about this phenomenon.

What if you want to hear genuine criticism? Use the same trick to ask for it. Write an open question instead of the conclusion to ensure everybody wakes up and starts thinking critically.

Some people are not comfortable with the cynical way I suggest exploiting the limitations of the human mind. Those people might be right. For them, I have another reason: self-discipline. Coming up with a short, concise, and descriptive title requires effort. This effort slows you down and ensures that you start thinking critically and asking questions. “What does this graph really tell?” “Is this the best way to demonstrate this conclusion?” “Is this conclusion relevant to the topic of my talk, is it worth the time?” These are very important questions that someone has to ask you. Sure, having a professional and devoted reviewer on your prep team is great, but unless you are a Fortune-500 CEO, you are preparing your presentations by yourself.

You will notice that my two arguments sound like a hack. They do not talk about the “pure science attitude”, and seem to be detached from the theoretical picture of the idealized scientific process. That is why, when that student objected to my suggestion, I admitted defeat. Being a data scientist, I want to feel good about my scientific practice. It took me a while but at some point, I realized that writing a conclusion as the sole title of a graph or a slide is a good scientific practice and not a compromise.

According to the great philosopher Karl Popper, a mandatory characteristic of any scientific theory is that it makes claims that future observations might show to be false. Popper claims that without taking a risk of being proved wrong, a scientist misses the point [ref]. And what is the best way to make a clear, risky statement, if not spelling it out as a clear, non-ambiguous title of your graph?

To sum up, whenever you create a graph or a slide, think hard about what conclusion you want your audience to make out of it. Use this conclusion as your title. This will help you check yourself, and will help your fellow scientists assess your theory. And if a purist professor says you shouldn’t write your conclusions, tell him or her that the great Karl Popper thought otherwise.

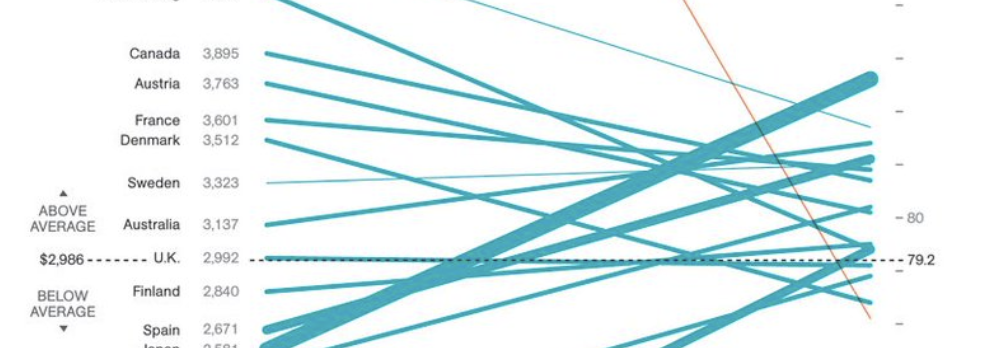

The fact that you can doesn’t mean that you should! I will say it once again. The fact that you can doesn’t mean that you should! Look at this slopegraph that was featured by “Information is Beautiful”:

https://twitter.com/infobeautiful/status/994510514054139904

What does it say? What do the slopes mean? It’s a slopegraph; its slopes should have a meaning. Sure, you can draw a line from one point to another, but can you see the problem here? In this nonsense graph, the viewer is invited to look at slopes of lines that connect dollars with years. The proverbial “apples and oranges” are not even close to the nonsense degree of this graph. Not even close.

This page attributes this graph to National Geographic, which makes me even sadder.

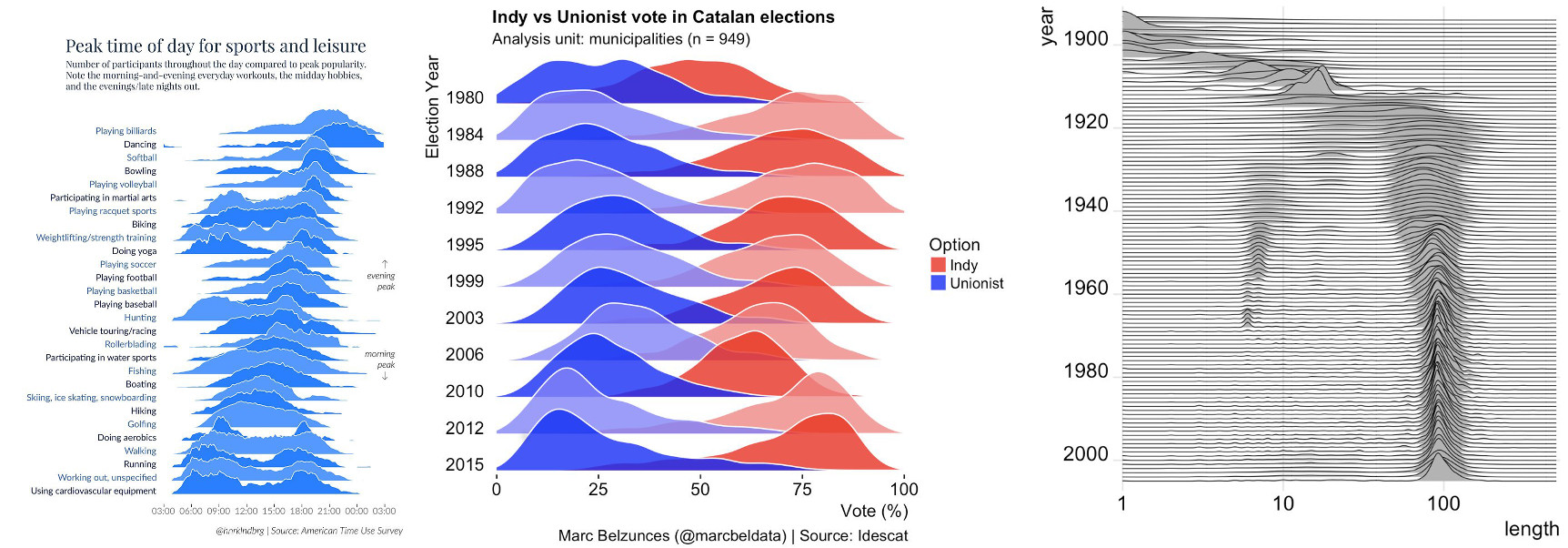

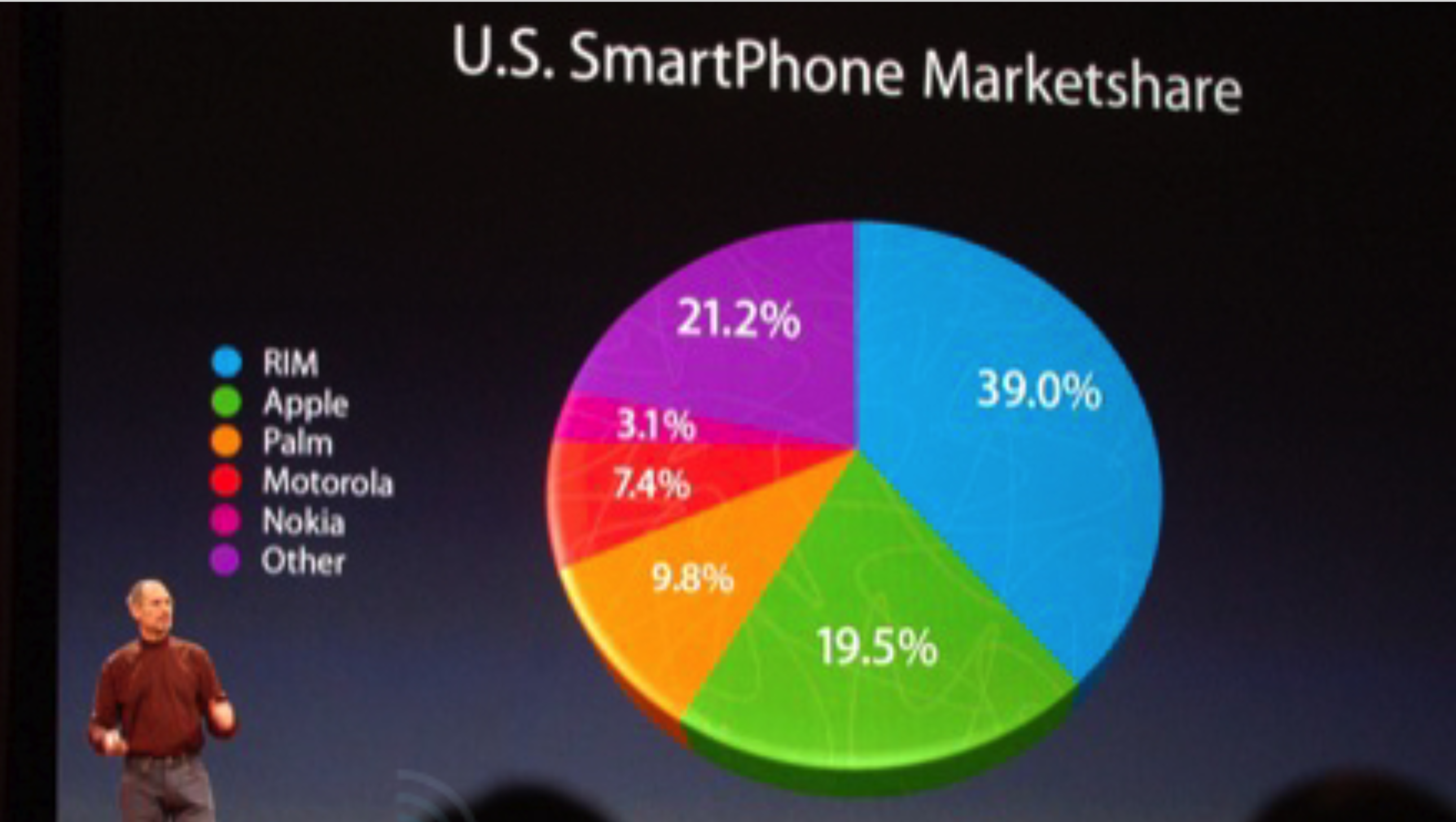

“There is only one thing worse than a pie chart. It’s a 3-D pie chart”. This is what I used to think for quite a long time. Recently, I have revised my attitude towards pie charts, mainly due to the works of Robert Kosara from Tableau. I am now so convinced that pie charts can be a good visualization choice that I even included a session, “Pie charts as an alternative to bar charts”, in my recent workshop.

What about three-dimensional graphs? I’m not talking about the situations where the data is intrinsically three-dimensional. Such situations lie within the consensus. I’m talking about adding a third dimension to graphs that can work in two dimensions. Like the example below that is taken from a 2017 post by Deven Wisner.

![Screenshot: a 3D pie chart with text "The only good thing about this pie chart is that it's starting to look more like [a] real pie"](/assets/img/2018/05/screen-shot-2018-05-28-at-14-41-43.png)

Of course, this is not a hypothetical example. We all remember how the late Steve Jobs tried to create a false impression of Apple’s market share.

Having said all that, can you think of a legitimate case where adding the third dimension adds more value than distraction? I worked hard, and I finally found it.

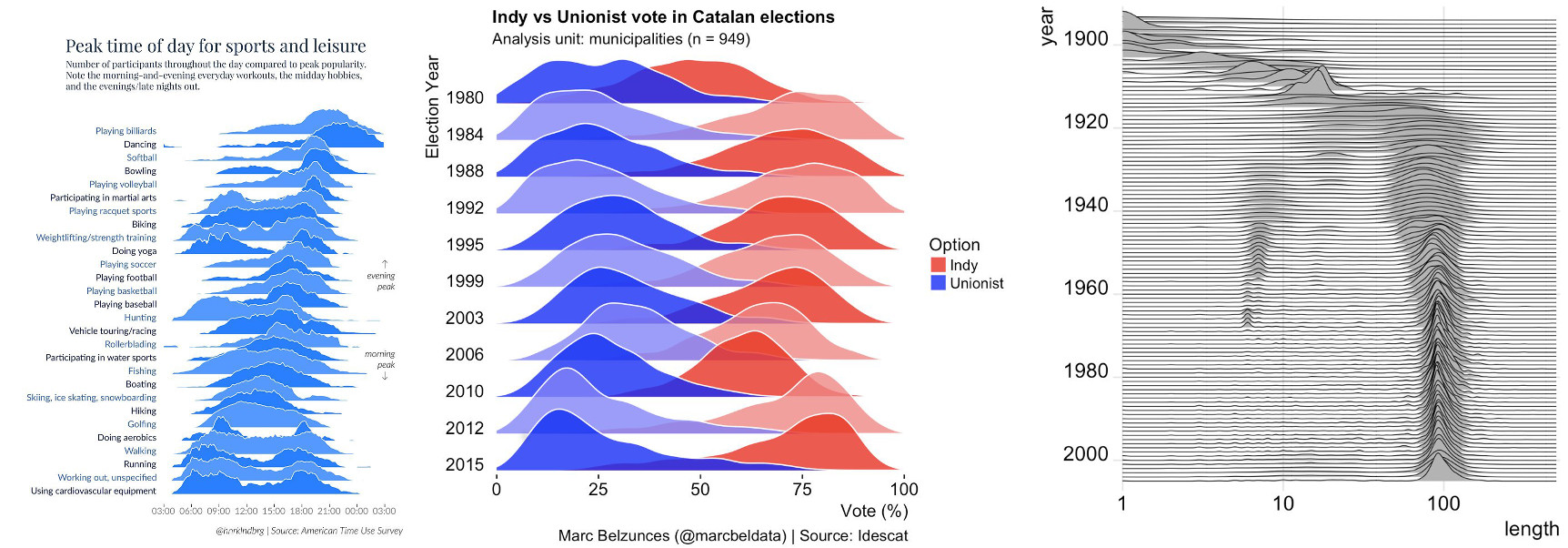

Take a look at the overlapping density plot (a.k.a. “joy plot”).

If you think about it, joyplots are nothing more than 3-D line graphs done well. Most of the time, they provide an information-rich overview of the data that also lets you dig into fine details. I really like joyplots. I included one in my recent workshop. Many libraries now provide ready-to-use implementations of joyplots. This is a good thing to have. My only reservation about those implementations is that many of them, including my favorite seaborn, add meaningless colors to the curves. But this is a topic for another rant.

Today, I hosted a data visualization workshop, as a part of the workshop day adjacent to the fourth Israeli Data Science Summit. I really enjoyed this workshop, especially the follow-up questions. These questions are the reason I volunteer to talk about data visualization every time I can. It may sound strange, but I learn a lot from the questions people ask me.

If you want to see the code, you may find it on GitHub. The slide deck is available on Slideshare

[slideshare id=99058016&doc=00abcthreemostcommonmistakes-180527153252]

I have been told that the data visualization workshop (“Data Visualization from default to outstanding. Test cases of tough data visualization”) is completely sold out. If you plan to attend this workshop, please check out the repository that I created for it [link]. In that repository, you will find a list of prerequisites that you absolutely need to meet before the workshop. Also, it will be very helpful if you could fill out this poll, which will help me prepare for the workshop.

See you soon

So, the data visualization workshop is fully booked. The organizers told me to expect 40-50 attendees and I need some assistance. I am looking for a person who will be able to answer technical questions such as “I got a syntax error”, “why can’t I see this graph?”, “my graph has different colors”.

It’s a good opportunity to attend the workshop for free, to learn a lot of useful information, and to meet a lot of smart people.

It’s a win-win situation. Contact me now at boris@gorelik.net

What: Data Visualization from default to outstanding. Test cases of tough data visualization

**Why:** You would never settle for default settings of a machine learning algorithm. Instead, you would tweak them to obtain optimal results. Similarly, you should never stop with the default results you receive from a data visualization framework. Sadly, most of you do.

When: May 27, 2018 (a day before the DataScience summit)/ 13:00 - 16:00

Where: Interdisciplinary Center (IDC) at Herzliya.

More info: here.

Timeline:

1. Theoretical introduction: three most common mistakes in data visualization (45 minutes)

2. Test case (LAB): Plotting several radically different time series on a single graph (45 minutes)

3. Test case (LAB): Bar chart as an effective alternative to a pie chart (45 minutes)

4. Test case (LAB): Pie chart as an effective alternative to a bar chart (45 minutes)

According to the conference organizers, the yearly Data Science Summit is the biggest data science event in Israel. This year, the conference will take place in Tel Aviv on Monday, May 28. One day before the main conference, there will be a workshop day, hosted at the Herzliya Interdisciplinary Center. I’m super excited to host one of the workshops, during the afternoon session. During this workshop, we will talk about the mistakes data scientists make while visualizing their data and the ways to avoid them. We will also have some fun creating various charts, comparing the results, and trying to learn from each other’s mistakes.

Other factors being equal, what language would you choose for heavy numeric computations: Python or PHP? This is not a language war but a serious question. For me, the choice seems to be obvious: I would choose Python, and I’m not the only one. In this survey, for example, 45% of data scientists use Python, compared to 24% who use PHP. The two sets of data scientists aren’t mutually exclusive, but we do see the picture.

This is why I was very surprised when a colleague of mine suggested switching to PHP because it was three times faster in a benchmark. I was surprised and intrigued, especially when I noticed that the benchmark used heavy number crunching.

In that benchmark, the authors compute prime numbers using the following Python code

[code lang=”python”]
import sys


def get_primes7(n):
    """
    standard optimized sieve algorithm to get a list of prime numbers
    --- this is the function to compare your functions against! ---
    """
    if n < 2:
        return []
    if n == 2:
        return [2]
    # do only odd numbers starting at 3
    if sys.version_info.major <= 2:
        s = range(3, n + 1, 2)
    else:  # Python 3
        s = list(range(3, n + 1, 2))
    # n ** 0.5 is simpler than math.sqrt(n)
    mroot = n ** 0.5
    half = len(s)
    i = 0
    m = 3
    while m <= mroot:
        if s[i]:
            j = (m * m - 3) // 2  # index of m * m in s (integer division)
            s[j] = 0
            # cross out every odd multiple of m from m * m onward
            while j < half:
                s[j] = 0
                j += m
        i = i + 1
        m = 2 * i + 3
    # whatever was not crossed out is prime
    return [2] + [x for x in s if x]
[/code]

Did you notice the problem? The code above is pure Python. I can’t think of a good reason to use pure Python code for computationally intensive, time-sensitive tasks. When you need to crunch numbers with Python, and when the computational time is even remotely important, you will most certainly use tools that were specifically optimized for such tasks. One of the most important such tools is numpy, in which the most important loops are implemented in compiled code (mostly C). Many other packages, such as Pandas, scipy, and sklearn, rely on numpy or on other forms of speed optimization.

The following snippet uses numpy to perform the same computation as the first one.

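[code lang=”python”]
import numpy as np


def numpy_primes(n):
    """ Input n>=6, Returns a array of primes, 2 <= p < n """
    # http://stackoverflow.com/questions/2068372/fastest-way-to-list-all-primes-below-n-in-python/3035188#3035188
    # sieve[i] stands for the number (3 * i + 1) | 1, i.e. 1, 5, 7, 11, 13, ...
    # (plain bool replaces the np.bool alias, which newer numpy versions removed)
    sieve = np.ones(n // 3 + (n % 6 == 2), dtype=bool)
    sieve[0] = False
    for i in range(int(n ** 0.5) // 3 + 1):
        if sieve[i]:
            k = 3 * i + 1 | 1
            # the C-level slice assignments replace the inner Python loop
            sieve[(k * k) // 3::2 * k] = False
            sieve[(k * k + 4 * k - 2 * k * (i & 1)) // 3::2 * k] = False
    return np.r_[2, 3, ((3 * np.nonzero(sieve)[0] + 1) | 1)]
[/code]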

On my computer, generating the primes smaller than 10,000,000 takes 1.97 seconds with the pure Python implementation and 21.4 milliseconds with the numpy version. The numpy version is 92 times faster!

**What does that mean?**
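If you want to repeat this kind of measurement yourself, the standard library’s `timeit` module is enough. The sketch below is a stand-in, not the benchmark’s code and not my original measurement. To stay dependency-free, it compares two pure-Python sieves that I wrote for illustration (`sieve_python_loop` and `sieve_slices` are hypothetical names). The second one replaces the inner `while` loop with one `bytearray` slice assignment per prime, which is a small-scale version of what numpy does: the loop still happens, but inside compiled code.

```python
import timeit


def sieve_python_loop(n):
    """Primes <= n, crossing out multiples one by one in a Python loop."""
    if n < 2:
        return []
    is_prime = [True] * (n + 1)
    is_prime[0] = is_prime[1] = False
    for m in range(2, int(n ** 0.5) + 1):
        if is_prime[m]:
            j = m * m
            while j <= n:
                is_prime[j] = False
                j += m
    return [i for i, flag in enumerate(is_prime) if flag]


def sieve_slices(n):
    """Primes <= n, crossing out multiples with one slice assignment per prime."""
    if n < 2:
        return []
    is_prime = bytearray([1]) * (n + 1)
    is_prime[0] = is_prime[1] = 0
    for m in range(2, int(n ** 0.5) + 1):
        if is_prime[m]:
            count = len(range(m * m, n + 1, m))
            # The crossing-out loop runs inside the interpreter's C code.
            is_prime[m * m :: m] = bytes(count)
    return [i for i, flag in enumerate(is_prime) if flag]


if __name__ == "__main__":
    n = 1_000_000
    # A benchmark is only meaningful if both versions compute the same thing.
    assert sieve_python_loop(n) == sieve_slices(n)
    for func in (sieve_python_loop, sieve_slices):
        seconds = min(timeit.repeat(lambda: func(n), number=1, repeat=3))
        print(f"{func.__name__}: {seconds:.3f} s")
```

I am deliberately not quoting numbers for this sketch. They depend on the machine, the Python version, and the value of `n`, so measure under the conditions that matter to you.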

Whoever owns the metric owns the results. Never trust a benchmark result before you understand how the benchmark was performed, and before making sure the benchmark was performed under the conditions that are relevant to you and your problem.

What would you do, if someone left a “Pile of Poo” emoji as a reaction to your photo in your team Slack channel?

This is exactly what happened to me a couple of days ago, when Sirin, my team lead, posted a picture of me talking to the Barcelona Machine Learning Meetup Group about data visualization.

Did I feel offended? Not at all. It was pretty funny, actually. To understand why, let’s talk about the importance of context in communication, especially in a distributed team.

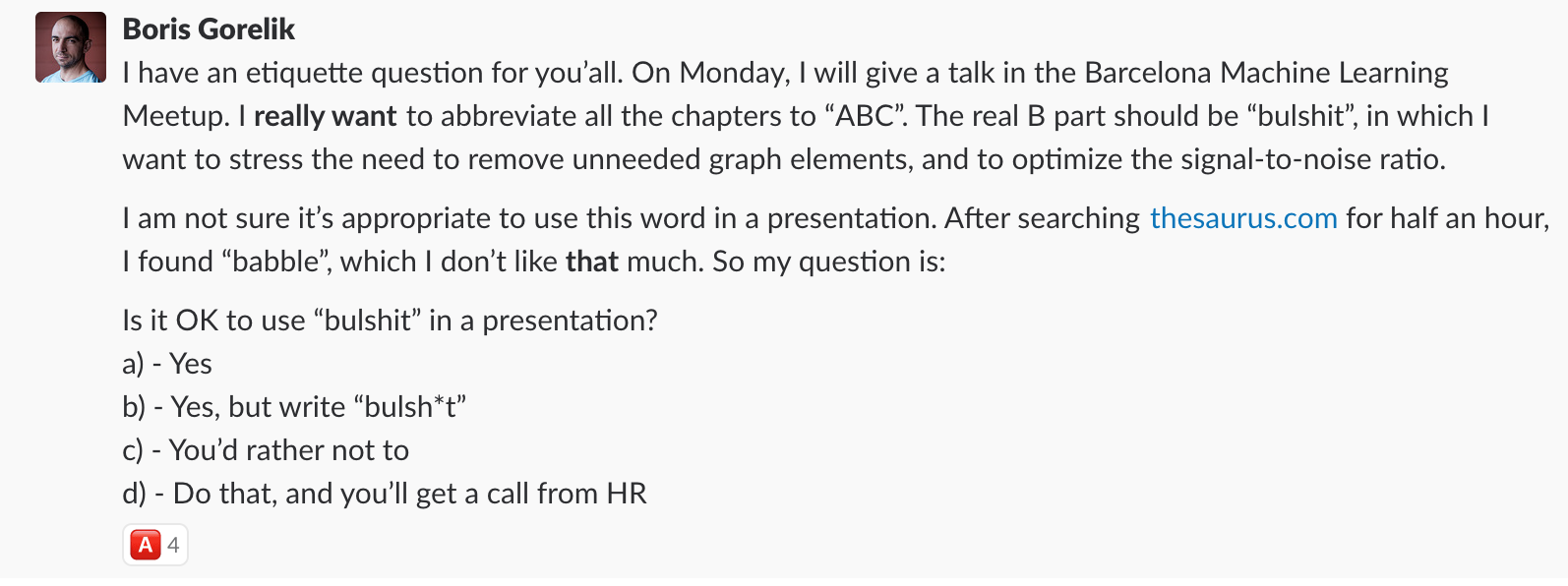

My Barcelona talk is titled “Three most common mistakes in data visualization and how to avoid them”. During the preparation, I noticed that the first mistake is about keeping the wrong attitude, and the third one is about not writing conclusions. I noticed the “A”, and “C”, and decided to abbreviate the talk as “ABC”. Now, I had to find the right word for the “B” chapter. The second point in my talk deals with low signal-to-noise ratio. How do you summarize signal-to-noise ratio using a word that starts with “B”? My best option was “bullshit”, as a reference to “noise” – the useless pieces of information that appear in so many graphs. I was so happy about “bullshit,” but I wasn’t sure it was culturally acceptable to use this word in a presentation. After fruitless searches for a more appropriate alternative, I decided to ask my colleagues.

All the responders agreed that using bullshit in a presentation was OK. Martin, the head of Data division at Automattic, provided the most elaborate answer.

I was excited that my neat idea was appropriate, so I went with my plan:

Understandably, the majority of the data community at Automattic became involved in this presentation. That is why, when Sirin posted a photo of me giving that presentation, it was only natural that one of them responded with a pile of poo emoji. How nice and cute! 💩

This bullshit story is not the only example of something said about me (if you can call an emoji “saying”) that sounded very bad to the unknowing person, but was in fact very correct and positive. I have a couple more examples that may be even funnier than this one but require more elaborate and boring explanations.

However, the lesson is clear. Next time you hear someone saying something unflattering about someone else, don’t jump to conclusions. Think about bullshit, and assume the best intentions.

Yesterday, I talked in front of the Barcelona Data Science and Machine Learning Meetup about the most common mistakes in data visualization. I enjoyed talking with the local community very much. Judging by the feedback I received during and after the talk, they, too, enjoyed my presentation. I uploaded my slides to Slideshare.

Enjoy!