From time to time, I get emails from people seeking advice about their career paths. If I have time, I write them an extended reply and, if they agree, I publish the questions and my replies here on my blog. Here’s one such email exchange. All similar pieces of advice, as well as other rants about a career in data science, can be found here.

“Hi Boris :)

My name is XXXXX. I came across your blog while searching for people with a mix of pharmacy and data science skillsets. Your blog has been so informative to me so far but I was compelled to write to you to ask for your advice.

I am a clinical pharmacist by background but decided to leave the clinical pharmacy to pursue public health. Whilst doing my MPH, I fell in love with epidemiology and statistics and am now doing a Ph.D. in biostatistics. Your blog has made me feel very happy that I made this career move <…> I feel better about my decision to leave the pharmacy and pursue a quant Ph.D. I have gone from pharmacy, to internships at as I wanted to pursue a career in and now I am thinking of data science in the tech industry…my background is a bit confusing!”

In the past, I also felt that the pharmacy degree confused many potential employers, and since I wanted to leave the bio/pharma world and move to “pure data” positions, I omitted the B.Pharm title and studies from my CV. Ten years ago, salaries in the bio sector here in Israel were much lower than salaries in the “high tech” field. I think that today the situation has more or less normalized, and that people have gotten used to the fact that a typical “data scientist” can have any of a very wide range of degrees.

“I was just wondering if I could get your opinion on the three questions I have.

1. I work part-time as a clinical pharmacist to not forget my clinical skills. What do you think about the future of the pharmacy career overall?”

My last shift as a pharmacist

This is a huge question and I don’t have an answer to it. Moreover, the answer depends heavily on the legal regulations in a given country. I say that if you enjoy treating people and can afford the time, why not? I, personally, was a very lousy pharmacist :-) so I was very happy to leave the pharmacy.

“I am wondering if I should keep up my pharmacist title or pursue data science full-time.”

Again, it depends. For many years, I didn’t have my pharmacy title in my CV because it felt unrelated to what I was doing. It was also a nice icebreaker to tell the people I worked with “by the way, I’m a pharmacist”, and it was fun to see their reactions. If I were you, I would ask two or three HR people or recruiters what they think. The answer may differ from country to country.

“2. At what point can someone call themselves a data scientist?”

In my opinion, as long as you are comfortable enough to call yourself a data scientist, you are good to go. Note that unlike many people who got their data science “title” after taking some online courses, you already have a very strong theoretical base. Not only are your Master’s and the future Ph.D. degree relevant to data science, but they also give you strong and unique advantages.

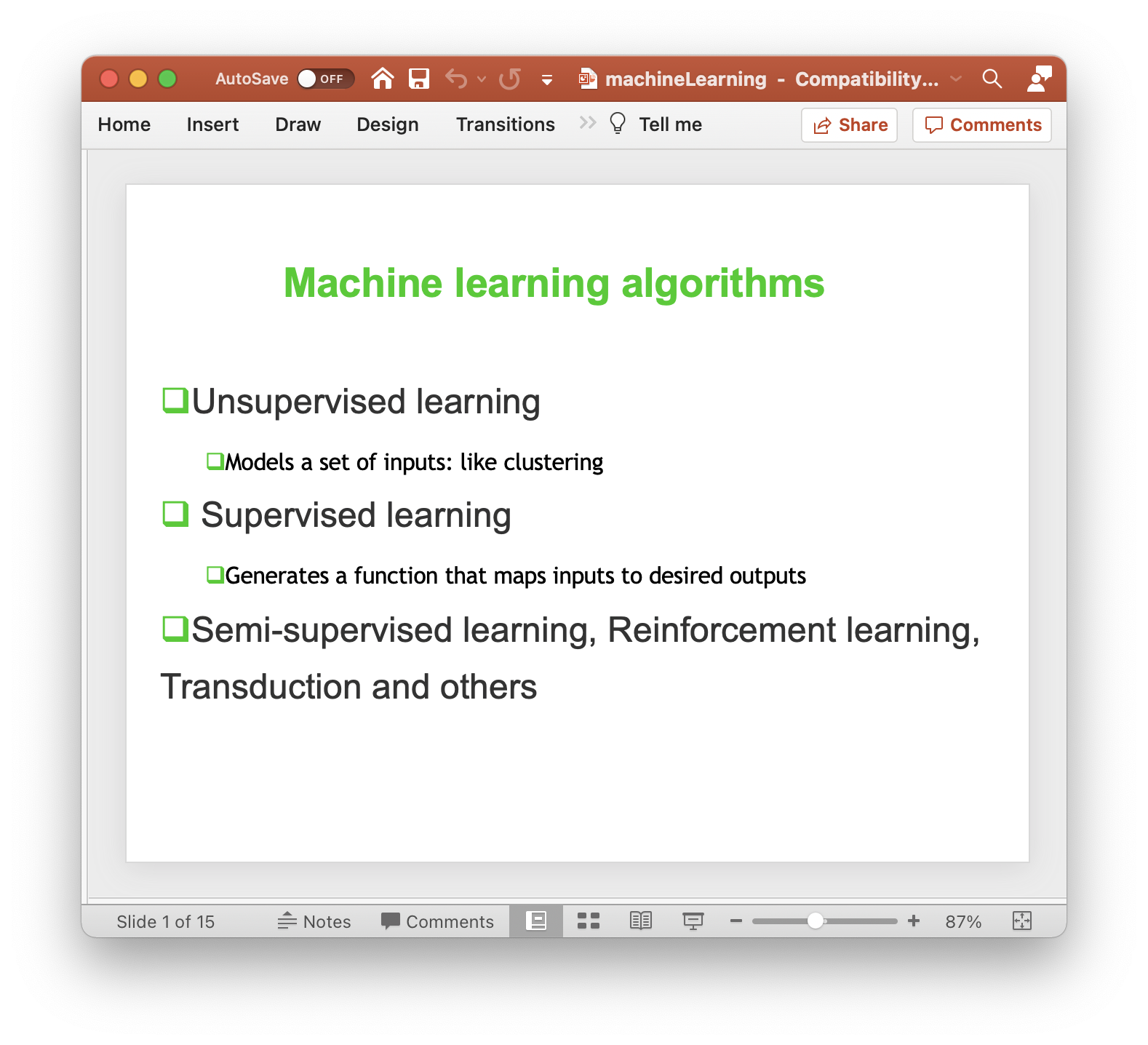

“I am looking at DS jobs at large tech companies. I am not sure how qualified and experienced I have to be for these jobs. I code in R using regression, clustering and time series methods and I am quite fluent in this language. I have just started to learn ML algorithms. I have a basic foundation in Python and SQL. I use Tableau for visualization and love communicating my research at any opportunity I get. I was wondering…how good do I have to be able to apply to DS jobs? What are the methods that data scientists use mostly? Would I be able to learn on the job?”

It sounds like a good combination of techniques. I am not recruiting, but if I were, I would definitely like this list of skills. Personally, I don’t like R too much and prefer Python. But once you can program in one language, moving to another one is a doable task. As for which methods data scientists use most, this depends hugely on your job. Most of my time, I clean data and write wrapper functions around known algorithms. The tasks that I have faced during my professional life required regression, classification, and network analysis. I never did real deep learning stuff, but I know people who only do deep learning for image and sound analysis. Also, in many cases, the data science part takes only 10% of your time because the “customer” doesn’t care about an algorithm; they want a solution. See this post for a nice example.

“3. If you had the opportunity to start your career again, say you were in your early twenties, what would you study and why? What advice would you have for your younger self? I would be so keen to hear what you think.”

This is a philosophical exercise that I never like doing. What is done is done. The fact that I am a pretty successful data scientist may mean that I made the right decisions or that I was super lucky.

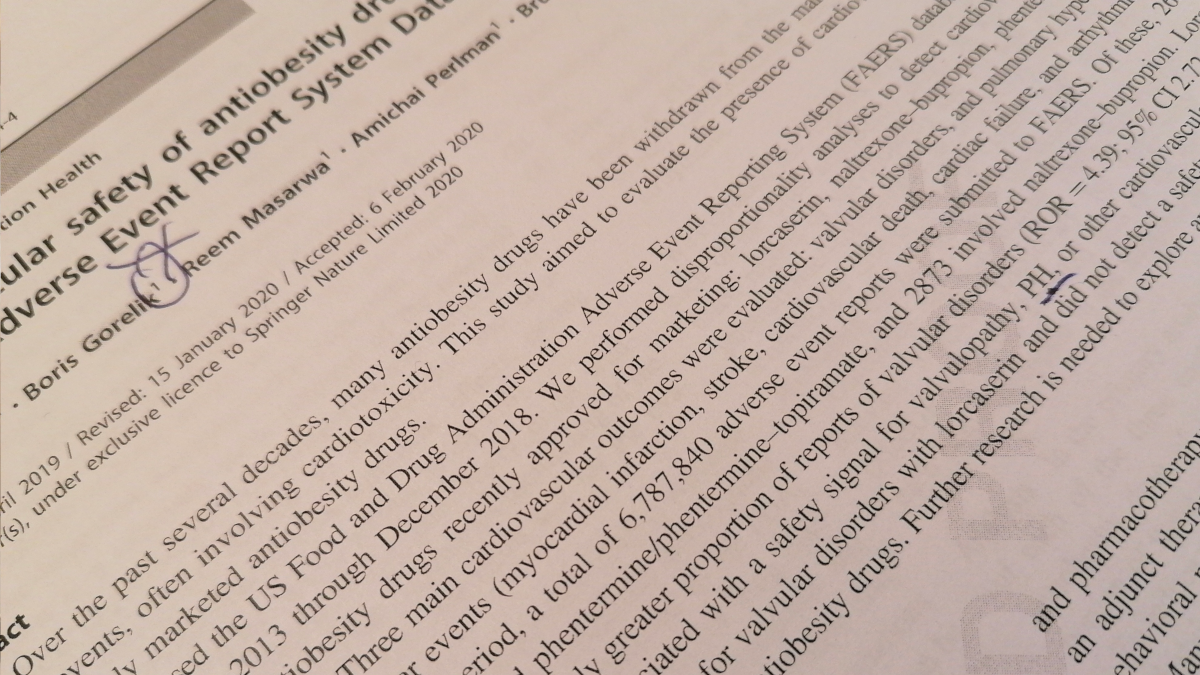

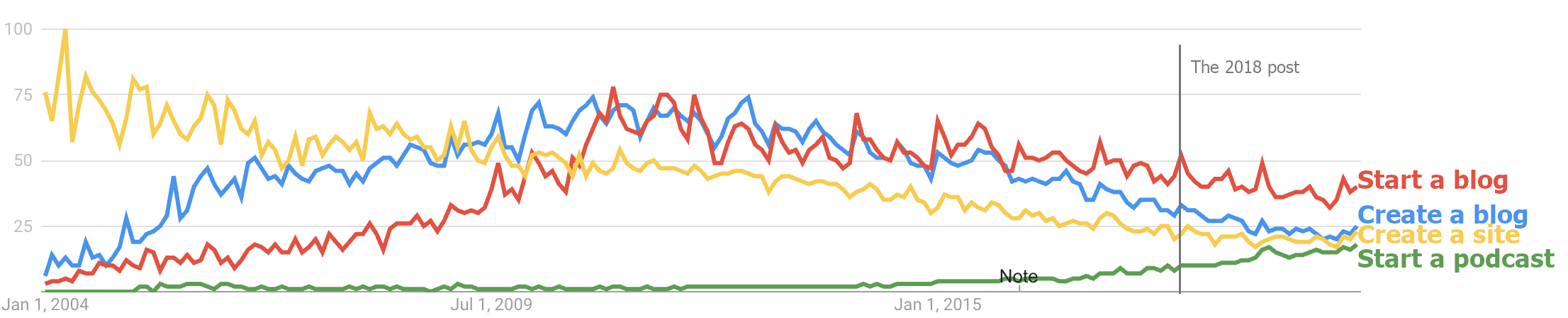

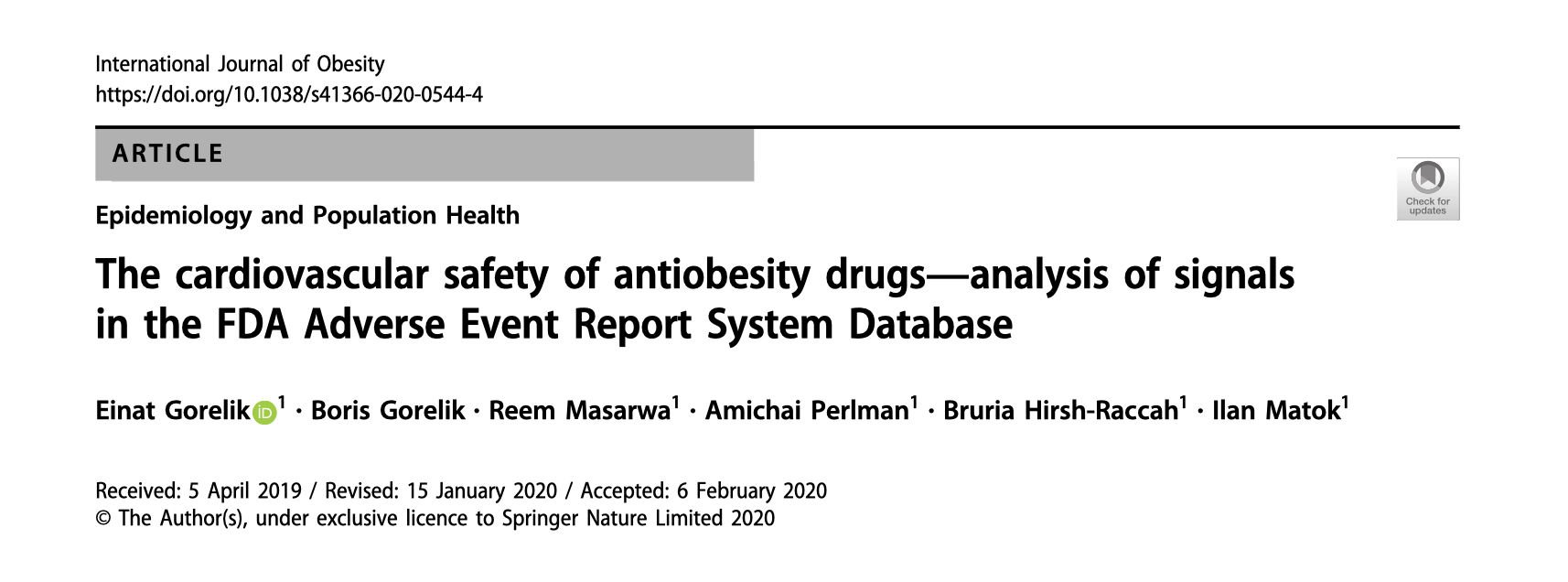
