Any dispute that is for the sake of Heaven is destined to endure; one that is not for the sake of Heaven is not destined to endure

Chapters of the Fathers 5:27

One day, I had an intense argument with a colleague at my previous place of work, Automattic. Since most of the communication in Automattic happens in internal blogs that are visible to the entire company, this was a public dispute. In a matter of a couple of hours, some people contacted me privately on Slack. They told me that the message exchange sounded aggressive, both from my side and from the side of my counterpart. I didn’t feel that way. In this post, I want to explain why it is OK to have a loud argument with your co-workers.

How did it all begin?

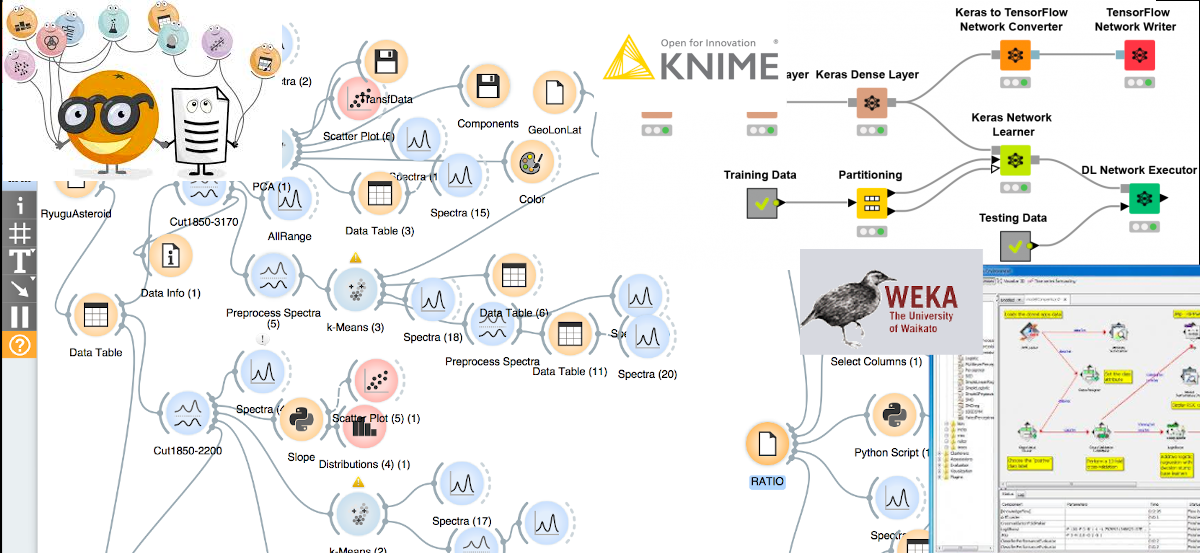

I’m a data scientist and algorithm developer. I like doing data science and developing algorithms. Sometimes, to be better at my job, I need to show my work to my colleagues. In a “regular” company, I would ask my colleagues to step into my office and play with my models. Automattic isn’t a “regular” company. At Automattic, people work from more than sixty countries, in every possible time zone. So, I wanted to start a server that would be visible to everyone in the company (and only to them), that would have access to the relevant data, and that would be able to run any software I install on it.

X is a system administrator. He likes administering the systems that serve more than 2000,000,000 unique visitors in the US alone. To be good at his job, X needs to make sure nothing bad happens to those systems. That’s why when X saw my request for the new setup (made on a company-visible blog page), his response was, more or less, “Please tell me why you think you need this, and why you can’t manage with what you already have.”

Frankly, I was furious. Usually, they tell you to count to ten before answering someone who made you angry. Instead, I went to my mother-in-law’s birthday party, and then I wrote an answer (again, on a company-visible blog). The answer was, more or less, “because I know what I’m doing.” To which X replied, more or less, “I know what I’m doing too.”

How did it get resolved?

At this point, I started realizing that X is not expected to jeopardize his professional reputation for the sake of my professional aspirations. It was true that I wanted to test a new algorithm that would bring a lot of value to the company for which I worked. It was also true that X doesn’t push back on developers’ requests out of caprice. His job is to keep the entire system working. Coincidentally, X contacted me over Slack, so I took the opportunity to apologize for anything that sounded like aggression from my side. I was pleased to hear that X hadn’t noticed any hostility, so we were good.

What eventually happened and was the dispute avoidable?

I don’t know whether it was possible to achieve the same or a better result without the loud argument. I admit: I was angry when I wrote some of the things that I wrote. However, I wasn’t mad at X as a person. I was angry because I thought I knew what was best for the company, and someone interfered with my plans.

I assume that X was angry when he wrote some of the things he wrote. I also believe that he wasn’t angry at me as a person but because he knew what was best for the company, and someone tried to interfere with his plans.

I’m sure, though, that it was this argument that enabled us to define the main “pain” points for both sides of the dispute. As long as the dispute was about ideas, not people, and as long as its goal was the common good, it was worth it. To my current and future colleagues: if you hear me arguing loudly, please know that this is a “dispute that is for the sake of Heaven [that] is destined to endure.”

Featured image: Source: http://mimiandeunice.com/; Bees image: Photo by Flickr user silangel, modified. Under the CC-BY-NC license.

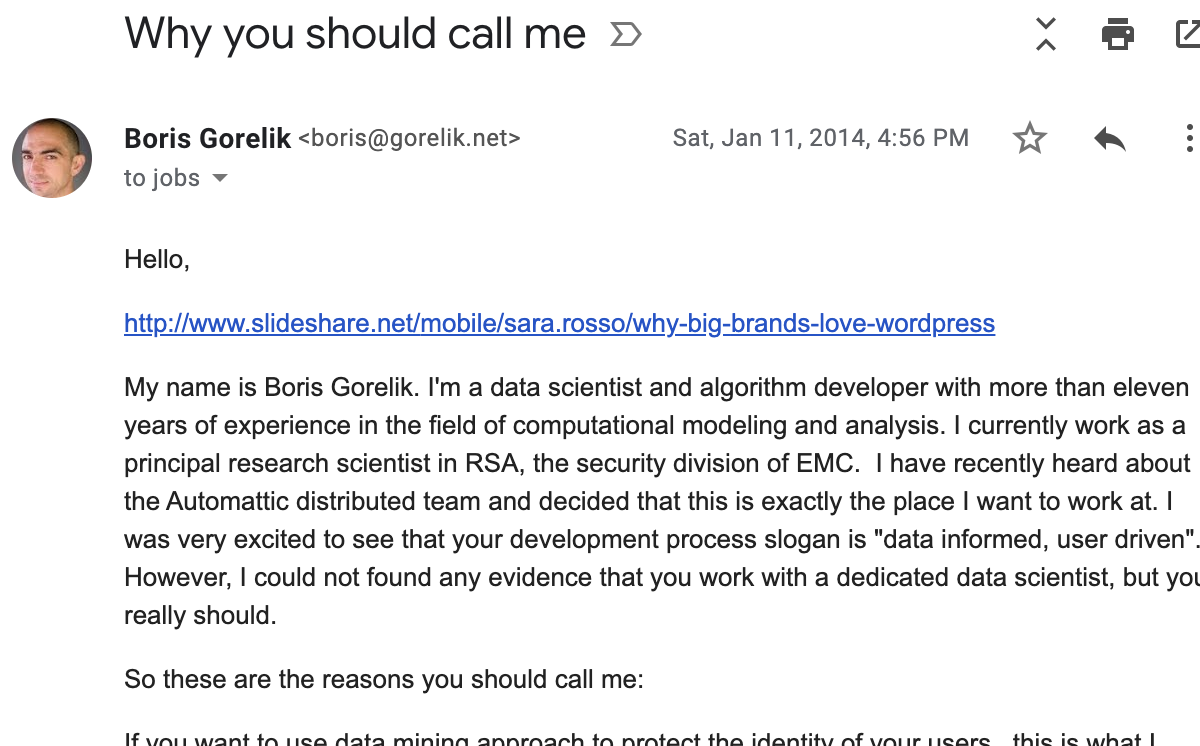
